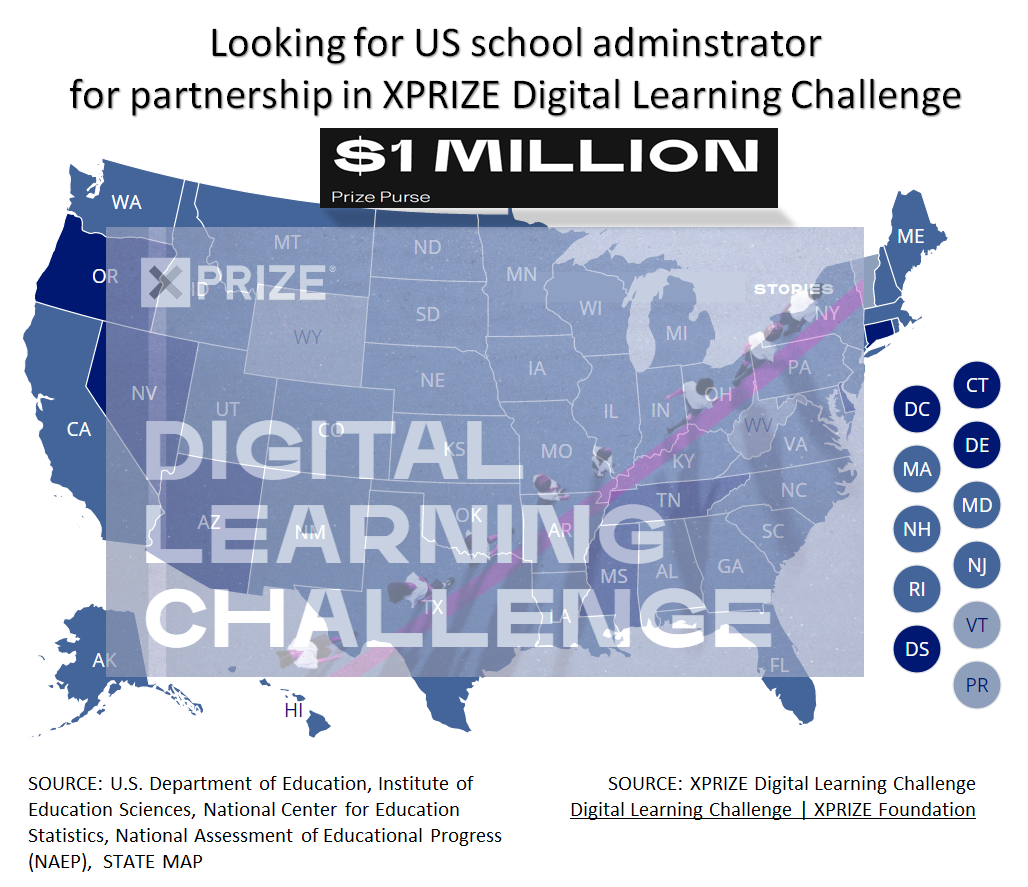


How do you like the idea of NOT saying "Oh, I forgot, let me get back to you"?


INVBAT.COM - AI: revolutionizing education and how we remember, using augmented intelligence.


INVBAT.COM - A.I. + CHATBOT is a voice search for formulas, calculators, reviewers, work procedures and frequently asked questions (FAQ). It is useful immediately and on demand from your smartphone, notebook, tablet, laptop or desktop computer. It helps you learn faster and, according to the site, never forget 98% of the time.

INVBAT.COM - AI + CHATBOT is a provider of personalized natural language search and information retrieval, an augmented intelligence service. We deliver immediate usefulness at an affordable cost to subscribers such as students, teachers, parents, and employees, helping them recall what they stored in the cloud in one click from a smartphone, tablet, laptop, desktop computer, or smart TV.


# Given: 10 sample points from a sensor measurement. The equation f(x) = x * np.sin(x) approximates
# the normal operation of the apparatus. This run uses 10 prediction points and a 97% prediction interval.
# Author : Apolinario (Sam) Ortega - founder of invbat.com-A.I + chatbot <admin@invbat.com>
# Date created: 6/13/2020
# License : BSD 3 clause
# Citation: scikit-learn.org sample gallery

print(__doc__)

print('')

import numpy as np

import matplotlib.pyplot as plt

from sklearn.ensemble import GradientBoostingRegressor

np.random.seed(1)

def f(x):
    """The function to predict."""
    return x * np.sin(x)

#----------------------------------------------------------------------
# Draw the training inputs: low = 0, high = 10, number of sample points = 10
# (Gaussian noise is added to the observations below, so this is the noisy case)

X = np.atleast_2d(np.random.uniform(0, 10.0, size=10)).T

X = X.astype(np.float32)

# Observations

y = f(X).ravel()

dy = 1.5 + 1.0 * np.random.random(y.shape)

noise = np.random.normal(0, dy)

y += noise

y = y.astype(np.float32)

# Mesh the input space to evaluate the true function and the predictions:
# low = 0, high = 10, number of prediction points = 10
xx = np.atleast_2d(np.linspace(0, 10, 10)).T
xx = xx.astype(np.float32)

# alpha is the upper quantile and 1 - alpha the lower one, so the band between them
# nominally covers 2 * alpha - 1 of the observations; 0.985 gives the stated 97%
alpha = 0.985

clf = GradientBoostingRegressor(loss='quantile', alpha=alpha,
                                n_estimators=250, max_depth=3,
                                learning_rate=.1, min_samples_leaf=9,
                                min_samples_split=9)

clf.fit(X, y)

# Make the prediction on the meshed x-axis

y_upper = clf.predict(xx)

clf.set_params(alpha=1.0 - alpha)

clf.fit(X, y)

# Make the prediction on the meshed x-axis

y_lower = clf.predict(xx)

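# Fit the point prediction with squared-error loss
# ('ls' was renamed 'squared_error' in scikit-learn 1.0 and removed in 1.2)
clf.set_params(loss='squared_error')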

clf.fit(X, y)

# Make the prediction on the meshed x-axis

y_pred = clf.predict(xx)

# Plot the true function, the prediction and the 97% prediction interval
# bounded by the upper and lower quantile fits
fig = plt.figure(figsize=(12, 8))
plt.plot(xx, f(xx), 'g:', label=r'$f(x) = x\,\sin(x)$')
plt.plot(X, y, 'b.', markersize=10, label='Observations')
plt.plot(xx, y_pred, 'r-', label='Prediction')
plt.plot(xx, y_upper, 'k-')
plt.plot(xx, y_lower, 'k-')
plt.fill(np.concatenate([xx, xx[::-1]]),
         np.concatenate([y_upper, y_lower[::-1]]),
         alpha=.3, fc='b', ec='None', label='97% prediction interval')
plt.title('Given 10 observations and 10 prediction points; 97% prediction interval', size=14)
plt.xlabel('$x$', size=12)

plt.ylabel('$f(x)$', size=12)

plt.ylim(-10, 20)

plt.legend(loc='upper right')

plt.show()

# In a notebook, press Shift + Enter to run the cell, then wait for the plot.
# The equation f(x) = x * np.sin(x) approximates the normal operation of the apparatus.
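A side note on the tree settings in the run above. As far as I understand scikit-learn's tree builder, a node is only split when it holds at least min_samples_split samples and at least 2 * min_samples_leaf samples, so that both children can meet the leaf minimum. The sketch below encodes that rule in plain Python; the helper name `can_split` is made up for illustration and is not part of scikit-learn.

```python
def can_split(n_samples, min_samples_split, min_samples_leaf):
    """Return True if a node holding n_samples could be split at all.

    Hypothetical helper mirroring the rule described above; it is not a
    scikit-learn function.
    """
    return (n_samples >= min_samples_split
            and n_samples >= 2 * min_samples_leaf)

# 10 training points with min_samples_leaf=9: the root cannot be split
print(can_split(10, min_samples_split=9, min_samples_leaf=9))   # False
# 100 training points with the same settings: splits are possible
print(can_split(100, min_samples_split=9, min_samples_leaf=9))  # True
```

Under that assumption, every tree fitted on 10 points is a single leaf, so each fitted curve is constant in x; with 100 points the trees can follow the shape of the data.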

# Given: 100 sample points from a sensor measurement. The equation f(x) = x * np.sin(x) approximates
# the normal operation of the apparatus. This run uses 100 prediction points, a 97% prediction interval
# and max_depth = 3.


print(__doc__)

print('')

import numpy as np

import matplotlib.pyplot as plt

from sklearn.ensemble import GradientBoostingRegressor

np.random.seed(1)

def f(x):
    """The function to predict."""
    return x * np.sin(x)

#----------------------------------------------------------------------
# Draw the training inputs: low = 0, high = 10, number of sample points = 100
# (Gaussian noise is added to the observations below, so this is the noisy case)

X = np.atleast_2d(np.random.uniform(0, 10.0, size=100)).T

X = X.astype(np.float32)

# Observations

y = f(X).ravel()

dy = 1.5 + 1.0 * np.random.random(y.shape)

noise = np.random.normal(0, dy)

y += noise

y = y.astype(np.float32)

# Mesh the input space to evaluate the true function and the predictions:
# low = 0, high = 10, number of prediction points = 100
xx = np.atleast_2d(np.linspace(0, 10, 100)).T
xx = xx.astype(np.float32)

# alpha is the upper quantile and 1 - alpha the lower one, so the band between them
# nominally covers 2 * alpha - 1 of the observations; 0.985 gives the stated 97%
alpha = 0.985

clf = GradientBoostingRegressor(loss='quantile', alpha=alpha,
                                n_estimators=250, max_depth=3,
                                learning_rate=.1, min_samples_leaf=9,
                                min_samples_split=9)

clf.fit(X, y)

# Make the prediction on the meshed x-axis

y_upper = clf.predict(xx)

clf.set_params(alpha=1.0 - alpha)

clf.fit(X, y)

# Make the prediction on the meshed x-axis

y_lower = clf.predict(xx)

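# Fit the point prediction with squared-error loss
# ('ls' was renamed 'squared_error' in scikit-learn 1.0 and removed in 1.2)
clf.set_params(loss='squared_error')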

clf.fit(X, y)

# Make the prediction on the meshed x-axis

y_pred = clf.predict(xx)

# Plot the true function, the prediction and the 97% prediction interval
# bounded by the upper and lower quantile fits
fig = plt.figure(figsize=(12, 8))
plt.plot(xx, f(xx), 'g:', label=r'$f(x) = x\,\sin(x)$')
plt.plot(X, y, 'b.', markersize=10, label='Observations')
plt.plot(xx, y_pred, 'r-', label='Prediction')
plt.plot(xx, y_upper, 'k-')
plt.plot(xx, y_lower, 'k-')
plt.fill(np.concatenate([xx, xx[::-1]]),
         np.concatenate([y_upper, y_lower[::-1]]),
         alpha=.3, fc='b', ec='None', label='97% prediction interval')
plt.title('Given 100 observations and 100 prediction points; 97% prediction interval', size=14)
plt.xlabel('$x$', size=12)

plt.ylabel('$f(x)$', size=12)

plt.ylim(-10, 20)

plt.legend(loc='upper right')

plt.show()

# In a notebook, press Shift + Enter to run the cell, then wait for the plot.
# The equation f(x) = x * np.sin(x) approximates the normal operation of the apparatus.
# The plot below uses GradientBoostingRegressor(max_depth=3). From my previous experience, setting
# max_depth = 6 improves the accuracy of the prediction interval band. Next iteration.
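One way to check a claim like this is to put numbers on the band rather than judge it by eye. The sketch below is a minimal plain-Python helper (the name `interval_summary` is an assumption for illustration) that reports the share of observations falling inside a band and the band's mean width. The three short lists are made-up values, not output from the model above.

```python
def interval_summary(y, lower, upper):
    """Return (coverage, mean_width) for a prediction band.

    coverage   : fraction of y values with lower <= y <= upper
    mean_width : average of upper - lower
    """
    inside = sum(lo <= yi <= up for yi, lo, up in zip(y, lower, upper))
    widths = [up - lo for lo, up in zip(lower, upper)]
    return inside / len(y), sum(widths) / len(widths)

# Made-up illustration values
y_obs = [1.0, 2.0, 3.0, 4.0]
band_lower = [0.0, 0.0, 4.0, 0.0]
band_upper = [2.0, 3.0, 5.0, 5.0]

coverage, mean_width = interval_summary(y_obs, band_lower, band_upper)
print(coverage, mean_width)  # 0.75 2.75
```

To apply it to one of the runs here, the lower and upper quantile fits would need to be predicted at the training inputs X (or, better, at held-out points) rather than on the plotting grid xx, so that each bound lines up with an observation.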

# Given: 100 sample points from a sensor measurement. The equation f(x) = x * np.sin(x) approximates
# the normal operation of the apparatus. This run uses 100 prediction points, a 97% prediction interval
# and max_depth = 6.


print(__doc__)

print('')

import numpy as np

import matplotlib.pyplot as plt

from sklearn.ensemble import GradientBoostingRegressor

np.random.seed(1)

def f(x):
    """The function to predict."""
    return x * np.sin(x)

#----------------------------------------------------------------------
# Draw the training inputs: low = 0, high = 10, number of sample points = 100
# (Gaussian noise is added to the observations below, so this is the noisy case)

X = np.atleast_2d(np.random.uniform(0, 10.0, size=100)).T

X = X.astype(np.float32)

# Observations

y = f(X).ravel()

dy = 1.5 + 1.0 * np.random.random(y.shape)

noise = np.random.normal(0, dy)

y += noise

y = y.astype(np.float32)

# Mesh the input space to evaluate the true function and the predictions:
# low = 0, high = 10, number of prediction points = 100
xx = np.atleast_2d(np.linspace(0, 10, 100)).T
xx = xx.astype(np.float32)

# alpha is the upper quantile and 1 - alpha the lower one, so the band between them
# nominally covers 2 * alpha - 1 of the observations; 0.985 gives the stated 97%
alpha = 0.985

# max_depth was 3 in the previous run; it is now 6 to see the effect on the prediction interval
clf = GradientBoostingRegressor(loss='quantile', alpha=alpha,
                                n_estimators=250, max_depth=6,
                                learning_rate=.1, min_samples_leaf=9,
                                min_samples_split=9)

clf.fit(X, y)

# Make the prediction on the meshed x-axis

y_upper = clf.predict(xx)

clf.set_params(alpha=1.0 - alpha)

clf.fit(X, y)

# Make the prediction on the meshed x-axis

y_lower = clf.predict(xx)

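# Fit the point prediction with squared-error loss
# ('ls' was renamed 'squared_error' in scikit-learn 1.0 and removed in 1.2)
clf.set_params(loss='squared_error')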

clf.fit(X, y)

# Make the prediction on the meshed x-axis

y_pred = clf.predict(xx)

# Plot the true function, the prediction and the 97% prediction interval
# bounded by the upper and lower quantile fits
fig = plt.figure(figsize=(12, 8))
plt.plot(xx, f(xx), 'g:', label=r'$f(x) = x\,\sin(x)$')
plt.plot(X, y, 'b.', markersize=10, label='Observations')
plt.plot(xx, y_pred, 'r-', label='Prediction')
plt.plot(xx, y_upper, 'k-')
plt.plot(xx, y_lower, 'k-')
plt.fill(np.concatenate([xx, xx[::-1]]),
         np.concatenate([y_upper, y_lower[::-1]]),
         alpha=.3, fc='b', ec='None', label='97% prediction interval')
plt.title('Given 100 observations and 100 prediction points; 97% prediction interval', size=14)
plt.xlabel('$x$', size=12)

plt.ylabel('$f(x)$', size=12)

plt.ylim(-10, 20)

plt.legend(loc='upper right')

plt.show()

# In a notebook, press Shift + Enter to run the cell, then wait for the plot.
# The equation f(x) = x * np.sin(x) approximates the normal operation of the apparatus.
# The plot below uses GradientBoostingRegressor(max_depth=6).
# What happens if I increase the number of prediction points from 100 to 1000? Next iteration.
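Before running that iteration, it helps to separate the two sample counts. The number of points in xx is only the grid on which the fitted curves are evaluated for plotting; the model is trained on X and y either way. The standard-library sketch below evaluates f(x) = x * sin(x) on a 10-point and a 1000-point grid over [0, 10] to show what the grid does change: how well the drawn curve resolves peaks. The helper name `linspace` is a stand-in for np.linspace.

```python
import math

def f(x):
    """The function to predict."""
    return x * math.sin(x)

def linspace(low, high, n):
    """Plain-Python stand-in for np.linspace(low, high, n)."""
    step = (high - low) / (n - 1)
    return [low + i * step for i in range(n)]

coarse_max = max(f(x) for x in linspace(0, 10, 10))
fine_max = max(f(x) for x in linspace(0, 10, 1000))

# The coarse grid steps over the peak near x = 8; the fine grid lands close to it
print(round(coarse_max, 2), round(fine_max, 2))
```

A finer grid therefore gives smoother, more faithful curves in the figure, but it should not be expected to change the fitted quantiles themselves.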

# Given: 100 sample points from a sensor measurement. The equation f(x) = x * np.sin(x) approximates
# the normal operation of the apparatus. This run uses 1000 prediction points, a 97% prediction interval
# and max_depth = 6.


print(__doc__)

print('')

import numpy as np

import matplotlib.pyplot as plt

from sklearn.ensemble import GradientBoostingRegressor

np.random.seed(1)

def f(x):
    """The function to predict."""
    return x * np.sin(x)

#----------------------------------------------------------------------
# Draw the training inputs: low = 0, high = 10, number of sample points = 100
# (Gaussian noise is added to the observations below, so this is the noisy case)

X = np.atleast_2d(np.random.uniform(0, 10.0, size=100)).T

X = X.astype(np.float32)

# Observations

y = f(X).ravel()

dy = 1.5 + 1.0 * np.random.random(y.shape)

noise = np.random.normal(0, dy)

y += noise

y = y.astype(np.float32)

# Mesh the input space to evaluate the true function and the predictions:
# low = 0, high = 10, number of prediction points = 1000
xx = np.atleast_2d(np.linspace(0, 10, 1000)).T
xx = xx.astype(np.float32)

# alpha is the upper quantile and 1 - alpha the lower one, so the band between them
# nominally covers 2 * alpha - 1 of the observations; 0.985 gives the stated 97%
alpha = 0.985

# max_depth stays at 6 (raised from 3 in an earlier run)
clf = GradientBoostingRegressor(loss='quantile', alpha=alpha,
                                n_estimators=250, max_depth=6,
                                learning_rate=.1, min_samples_leaf=9,
                                min_samples_split=9)

clf.fit(X, y)

# Make the prediction on the meshed x-axis

y_upper = clf.predict(xx)

clf.set_params(alpha=1.0 - alpha)

clf.fit(X, y)

# Make the prediction on the meshed x-axis

y_lower = clf.predict(xx)

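# Fit the point prediction with squared-error loss
# ('ls' was renamed 'squared_error' in scikit-learn 1.0 and removed in 1.2)
clf.set_params(loss='squared_error')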

clf.fit(X, y)

# Make the prediction on the meshed x-axis

y_pred = clf.predict(xx)

# Plot the true function, the prediction and the 97% prediction interval
# bounded by the upper and lower quantile fits
fig = plt.figure(figsize=(12, 8))
plt.plot(xx, f(xx), 'g:', label=r'$f(x) = x\,\sin(x)$')
plt.plot(X, y, 'b.', markersize=10, label='Observations')
plt.plot(xx, y_pred, 'r-', label='Prediction')
plt.plot(xx, y_upper, 'k-')
plt.plot(xx, y_lower, 'k-')
plt.fill(np.concatenate([xx, xx[::-1]]),
         np.concatenate([y_upper, y_lower[::-1]]),
         alpha=.3, fc='b', ec='None', label='97% prediction interval')
plt.title('Given 100 observations and 1000 prediction points; 97% prediction interval', size=14)
plt.xlabel('$x$', size=12)

plt.ylabel('$f(x)$', size=12)

plt.ylim(-10, 20)

plt.legend(loc='upper right')

plt.show()

# In a notebook, press Shift + Enter to run the cell, then wait for the plot.
# The equation f(x) = x * np.sin(x) approximates the normal operation of the apparatus.
# The plot below uses GradientBoostingRegressor(max_depth=6) and 1000 prediction points.
# What happens if I lower the prediction interval to 90%, since my apparatus has a +/- 10% tolerance?
# Next iteration.
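A note on how the interval level maps to the alpha parameter, since the next run changes it. With loss='quantile', alpha is the quantile being fitted, so a band between the alpha and 1 - alpha fits nominally covers 2 * alpha - 1 of the observations. The small sketch below converts in both directions; the function names are made up for illustration.

```python
def nominal_coverage(alpha):
    """Coverage of the band between the (1 - alpha) and alpha quantiles."""
    return 2.0 * alpha - 1.0

def upper_quantile_for(coverage):
    """Upper quantile alpha whose symmetric band has the given coverage."""
    return (1.0 + coverage) / 2.0

print(round(upper_quantile_for(0.90), 3))  # 0.95
print(round(upper_quantile_for(0.97), 3))  # 0.985
print(round(nominal_coverage(0.97), 2))    # 0.94
```

Read this way, a 90% band pairs the 0.05 and 0.95 quantile fits, and a 97% band pairs the 0.015 and 0.985 fits. The nominal level is only a target: with 100 noisy observations the realized coverage can differ noticeably.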

# Given: 100 sample points from a sensor measurement. The equation f(x) = x * np.sin(x) approximates
# the normal operation of the apparatus. This run uses 1000 prediction points, max_depth = 6
# and a 90% prediction interval.


print(__doc__)

print('')

import numpy as np

import matplotlib.pyplot as plt

from sklearn.ensemble import GradientBoostingRegressor

np.random.seed(1)

def f(x):
    """The function to predict."""
    return x * np.sin(x)

#----------------------------------------------------------------------
# Draw the training inputs: low = 0, high = 10, number of sample points = 100
# (Gaussian noise is added to the observations below, so this is the noisy case)

X = np.atleast_2d(np.random.uniform(0, 10.0, size=100)).T

X = X.astype(np.float32)

# Observations

y = f(X).ravel()

dy = 1.5 + 1.0 * np.random.random(y.shape)

noise = np.random.normal(0, dy)

y += noise

y = y.astype(np.float32)

# Mesh the input space to evaluate the true function and the predictions:
# low = 0, high = 10, number of prediction points = 1000
xx = np.atleast_2d(np.linspace(0, 10, 1000)).T
xx = xx.astype(np.float32)

# alpha is the upper quantile and 1 - alpha the lower one, so the band between them
# nominally covers 2 * alpha - 1 of the observations; 0.95 gives the stated 90%
alpha = 0.95

# max_depth stays at 6 (raised from 3 in an earlier run)
clf = GradientBoostingRegressor(loss='quantile', alpha=alpha,
                                n_estimators=250, max_depth=6,
                                learning_rate=.1, min_samples_leaf=9,
                                min_samples_split=9)

clf.fit(X, y)

# Make the prediction on the meshed x-axis

y_upper = clf.predict(xx)

clf.set_params(alpha=1.0 - alpha)

clf.fit(X, y)

# Make the prediction on the meshed x-axis

y_lower = clf.predict(xx)

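# Fit the point prediction with squared-error loss
# ('ls' was renamed 'squared_error' in scikit-learn 1.0 and removed in 1.2)
clf.set_params(loss='squared_error')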

clf.fit(X, y)

# Make the prediction on the meshed x-axis

y_pred = clf.predict(xx)

# Plot the true function, the prediction and the 90% prediction interval
# bounded by the upper and lower quantile fits
fig = plt.figure(figsize=(12, 8))
plt.plot(xx, f(xx), 'g:', label=r'$f(x) = x\,\sin(x)$')
plt.plot(X, y, 'b.', markersize=10, label='Observations')
plt.plot(xx, y_pred, 'r-', label='Prediction')
plt.plot(xx, y_upper, 'k-')
plt.plot(xx, y_lower, 'k-')
plt.fill(np.concatenate([xx, xx[::-1]]),
         np.concatenate([y_upper, y_lower[::-1]]),
         alpha=.3, fc='b', ec='None', label='90% prediction interval')
plt.title('Given 100 observations and 1000 prediction points; 90% prediction interval', size=14)
plt.xlabel('$x$', size=12)

plt.ylabel('$f(x)$', size=12)

plt.ylim(-10, 20)

plt.legend(loc='upper right')

plt.show()

# In a notebook, press Shift + Enter to run the cell, then wait for the plot.
# The equation f(x) = x * np.sin(x) approximates the normal operation of the apparatus.
# The plot below uses GradientBoostingRegressor(max_depth=6), 1000 prediction points and a
# 90% prediction interval, chosen because my apparatus has a +/- 10% tolerance.
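For readers wondering what loss='quantile' optimizes: quantile regression minimizes the pinball (quantile) loss, which penalizes under-prediction with weight alpha and over-prediction with weight 1 - alpha. The plain-Python sketch below is an illustration on made-up data, not scikit-learn's implementation; it shows that the constant minimizing this loss is an empirical quantile of the sample.

```python
def pinball_loss(y, q, alpha):
    """Mean pinball loss of predicting the constant q for every value in y."""
    total = 0.0
    for yi in y:
        diff = yi - q
        # Under-prediction (diff > 0) costs alpha, over-prediction costs 1 - alpha
        total += alpha * diff if diff >= 0 else (alpha - 1.0) * diff
    return total / len(y)

data = list(range(1, 11))  # made-up sample: 1, 2, ..., 10
alpha = 0.75

# Try each data value as the constant prediction and keep the best one
best = min(data, key=lambda q: pinball_loss(data, q, alpha))
print(best)  # 8, the smallest value with at least 75% of the sample at or below it
```

Gradient boosting applies the same loss, but lets the prediction vary with x, which is why the upper and lower fits trace the edges of the scattered observations.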

INVBAT.COM - A.I. is a disruptive innovation in computing and web search technology. For example, a scientific calculator helps us speed up calculation, but we still need to remember the formula accurately, along with the correct sequence of data entry. INVBAT.COM-A.I. solves this problem by combining the formula and the calculation and making them available on demand on any internet-connected smartphone, tablet, notebook, Chromebook, laptop, desktop, school smartboard or conference-room big-screen TV.

For web search, INVBAT.COM-A.I. demonstrates that you can type text or use voice-to-text A.I. to search the web and get a direct answer in one or two clicks. You don't need to waste time looking through millions of search results.